Building an agentic PM workflow: Vision and Plan

I have been seeing a lot of posts lately about PMs using AI agents to automate parts of their workflow — and many of them are reporting real productivity gains. Not just using AI to answer questions but actually shipping faster, handling more of the end-to-end workflow, and freeing up time for the work that requires human judgment.

I wanted to see for myself. More importantly, I wanted to build it myself and learn from the process. The question I kept coming back to was: where exactly do I add the most value in a product workflow, and what can I confidently hand off to agents? The answer isn't "everything" and it isn't "nothing" — it's somewhere in between, and the only way to find that line is to build the system and run it.

What I'm building

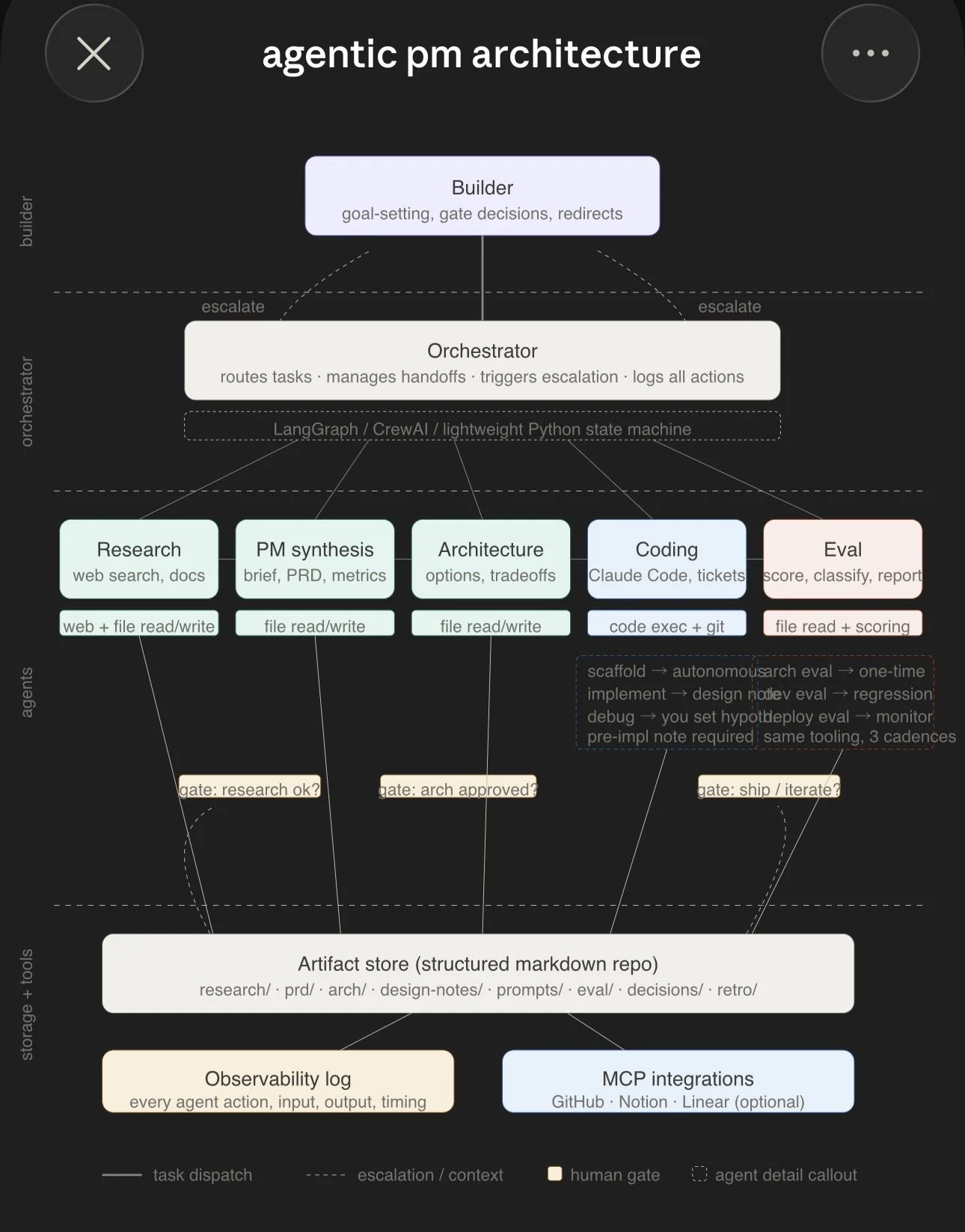

A multi-agent system that covers the end-to-end product workflow — from research to shipping. Five specialist agents, each responsible for a different stream of work, coordinated by a lightweight orchestrator.

The agents:

Research — web search, document synthesis, competitive analysis

PM synthesis — product briefs, PRDs, metrics, user journey work

Architecture — options analysis, tradeoffs, technical design

Coding — implementation via Claude Code, tickets, version control

Eval — scoring, test coverage, regression checks, quality gates

The orchestrator routes tasks between agents, manages handoffs, triggers escalation when something breaks, and logs everything.

The part that matters most: human gates

The system has three explicit points where it stops and asks for human input:

After research — is this research sufficient? Are we looking at the right things?

After architecture — is this the right design direction before we commit to building?

After eval — are we ready to ship, or do we need to iterate?

These are the moments where the cost of being wrong is highest and where human judgment — taste, context, domain intuition — adds the most value. Everything between the gates is where agents run. You set the goal, make the high-stakes calls, and let the system handle the boilerplate.

Flexibility across products

This matters because not every build is the same. One app might need heavy time spent on debugging and edge case handling — lots of cycles between the coding and eval agents. Another might need deep work on user journeys and compliance — more time in PM synthesis and architecture. A data-heavy product might need extensive research before anything else moves.

The system is designed to let you go as deep or as shallow as needed in each layer. The agents and gates are the same. The time and attention you spend at each gate shifts based on what the product demands.

I built this for PM work because that's what I know. But the underlying structure — break work into streams, match each stream to a capability, put human gates at the high-leverage decision points — can be adapted for different roles and skillsets. A developer might spend most of their time at the architecture and eval gates. A founder might focus on the research and synthesis gates. The pattern is the same even if the labels change.

How the architecture fits together

Agentic PM Architecture

The system has four layers:

Builder (you) — goal-setting, gate decisions, redirects when things go sideways.

Orchestrator — a lightweight Python state machine (starting with raw Python, introducing LangGraph after the first full loop runs). Routes tasks, manages handoffs, triggers escalation, logs every action.

Agents — five specialists, each with their own tools and model allocation. Research gets web access. Coding gets code execution and git. Eval gets file access and scoring tools. Each agent reads from and writes to a shared artifact store.

Storage and tools — a structured markdown repo on GitHub serves as the artifact store. Every research summary, product brief, architecture option, and eval result lives here in a consistent format that any agent can read. An observability log captures every agent action with inputs, outputs, and timing.

Why custom evals

One of the things I wanted to learn through this project was how evals work in practice. When I started looking at which model to assign to each agent, the natural starting point was public benchmarks — GPQA, SWE-bench, and so on.

The problem was that the benchmarks didn't map cleanly to the capabilities I needed. GPQA tests graduate-level science reasoning. That's useful, but it doesn't tell me which model is best at challenging the premise of a product idea or compressing messy research notes into a usable artifact. The streams I defined needed capabilities that no public benchmark directly measures.

So I designed a custom eval. I used a product I had previously built — a smart trash can system — as the simulation domain and ran all three models (Claude, Gemini, GPT) through each stream with scored dimensions tailored to what that stream actually requires. Things like: does the model challenge the framing or just answer within it? Can you act on the output immediately? Does it hedge appropriately on uncertain technical claims?

I'll go deeper into the eval process and results in the next post. But two findings worth previewing:

Benchmarks weren't predictive of workflow performance. The model with the highest reasoning benchmark scores didn't lead on the reasoning dimensions that mattered for PM work. Academic reasoning and product reasoning test different cognitive operations.

Each model has a default lens. One thinks primarily in compliance and business consequences. Another defaults to safety and liability framing. The third leads with architecture and abstraction. All three perspectives are correct. No single model holds all three simultaneously. That finding shaped how I think about when to use one model versus querying all three.

What's next

This is the first in a series:

Vision and plan — this post

Eval process and results — the custom eval methodology, scoring, and model allocation decisions

The build — orchestrator implementation, agent configuration, artifact store setup

Test runs — running the system on a real product build and documenting what works and what breaks

The repo is live at github.com/ssugathan/pm-os if you want to follow along.

If you've built something similar — a multi-agent workflow for product work or any other domain — I'd genuinely like to hear what you learned. What would you do differently? What broke that you didn't expect? Drop a comment or reach out!